A research preview of an AI system quietly did something that makes every CISO reconsider their threat model. Without a human directing its steps, this new model identified thousands of zero-day vulnerabilities across every major operating system and browser. Not in years. Not in months. In weeks.

That AI was Claude Mythos Preview. And while the security community has been debating AI’s role in defense, the offensive capability question just answered itself.

The question for security leaders is no longer whether AI will change the threat landscape. It already has. The question is: Are you using it before your adversary does?

“The window to act is not months. It’s now.”

The Mythos moment: What actually changed

To understand why this matters, you need to understand what made Mythos different from every automated security tool that came before it.

Traditional vulnerability scanning is mechanical. It pattern-matches. It checks known signatures against known weaknesses. Fuzzing tools throw random inputs at a target and watch for crashes. Static analysis tools parse code for common mistakes. These are powerful tools, but they are fundamentally reactive — they find what they’ve been taught to look for.

Mythos reasons. It chains observations together. It understands context. It identified a 27-year-old bug in OpenBSD — a vulnerability that had been sitting invisible in production code since 1997. Human security researchers had been looking at that codebase for nearly three decades. The AI found it in a fraction of the time.

This isn’t an incremental improvement in tooling. It’s a categorical shift in who finds vulnerabilities first — and how fast they do it.

And Mythos won’t remain unique for long. OpenAI, Google DeepMind, and other frontier labs are developing comparable capabilities. As AI-powered offensive tooling spreads, through “Spud” and its successors, sophisticated exploit-chaining capability will not be gated by nation-state budgets for much longer.

The defender’s dilemma: Fighting yesterday’s war

Most enterprise security programs were designed for a world where attackers operate at human speed. Annual penetration tests. Quarterly vulnerability scans. Patches deployed on a monthly cycle. In that world, defenders had time.

That world no longer exists.

AI-powered attacks operate continuously. They don’t take weekends off. They don’t miss a vulnerability because the analyst was tired. They don’t need months to chain an exploit — they do it in hours. The gap between time-to-discover and time-to-exploit, which defenders have always relied upon to buy response time, is collapsing.

“The gap between time-to-discover and time-to-exploit, which defenders have always relied upon to buy response time, is collapsing.” -Abbas Kudrati

Consider the identity attack surface. As enterprises have scaled their agentic AI deployments, they’ve created millions of non-human identities — service accounts, API keys, OAuth tokens, CI/CD credentials. Many of these are over-privileged. Many are unmonitored. Many outlive the humans who created them. An AI adversary doesn’t need to brute-force your perimeter — it reasons its way through your identity estate, pivoting from one misconfigured credential to the next.
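To make that pivoting concrete, the sketch below models an identity estate as a directed graph, where an edge means "whoever holds this credential can reach that one." It then uses breadth-first search to list everything reachable from a single leaked credential, along with the shortest chain to each target. The graph and every identity name in it are hypothetical. In a real environment the edges would have to be derived from your own IAM policies, secret stores, and CI/CD configuration.

```python
from collections import deque

# Hypothetical credential graph: an edge A -> B means "holding A grants access to B".
# All names are illustrative and not drawn from any real environment.
ACCESS_GRAPH: dict[str, list[str]] = {
    "ci-runner-token": ["deploy-svc-account"],
    "deploy-svc-account": ["prod-k8s-api", "secrets-vault-reader"],
    "secrets-vault-reader": ["billing-db-password", "oauth-client-secret"],
    "oauth-client-secret": ["crm-api"],
    "prod-k8s-api": [],
    "billing-db-password": [],
    "crm-api": [],
}


def reachable_from(foothold: str, graph: dict[str, list[str]]) -> dict[str, list[str]]:
    """Breadth-first search from `foothold`.

    Returns every reachable node mapped to the shortest credential chain that reaches it.
    """
    paths = {foothold: [foothold]}
    queue = deque([foothold])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in paths:  # in BFS, the first visit is via a shortest chain
                paths[nxt] = paths[node] + [nxt]
                queue.append(nxt)
    return paths


if __name__ == "__main__":
    for target, chain in reachable_from("ci-runner-token", ACCESS_GRAPH).items():
        print(f"{target}: {' -> '.join(chain)}")
```

Every line printed marks a place an attacker holding `ci-runner-token` could reach. The longer chains are the ones human reviewers tend to miss.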

Ask yourself: If an AI agent began probing your environment tonight, how long before it found something? And how long before you knew?

If you can’t answer both parts of that question with confidence, you have a problem that no compliance framework will solve for you.

The APEX framework: Out-architecting the attacker

You cannot out-patch an AI-enabled attacker. The vulnerability surface is too large, the speed too great, and the reasoning capability too sophisticated. What you can do is out-architect it.

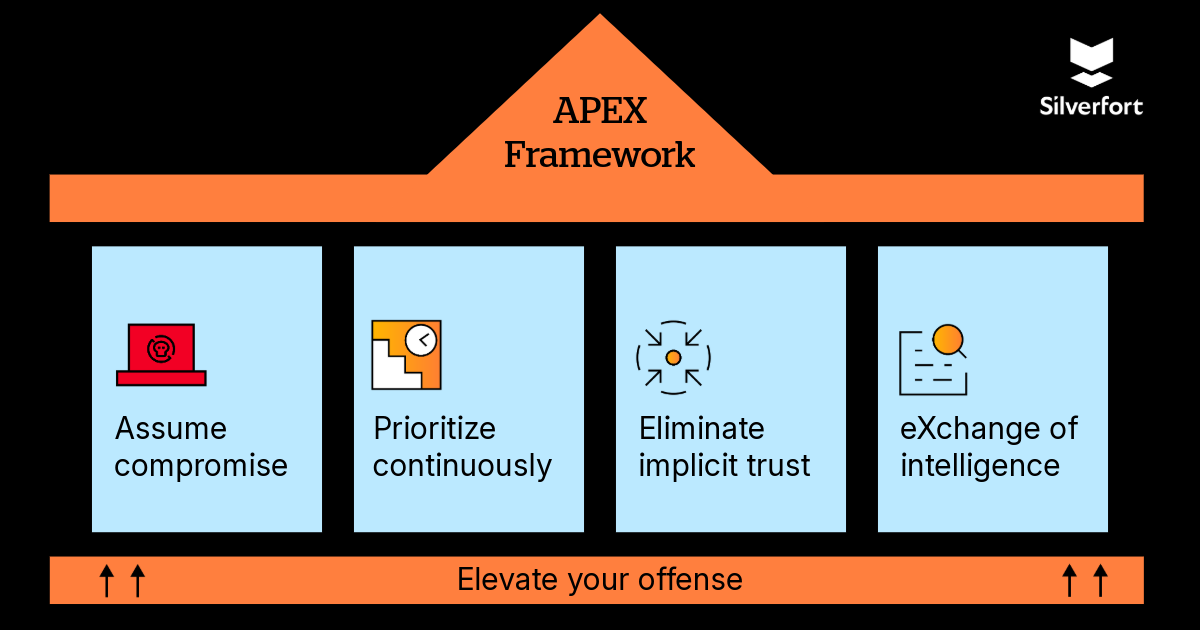

I’ve been developing a practical framework for exactly this challenge — APEX: AI-Powered Exposure eXchange. Five pillars, each actionable from Monday morning.

Assume compromise

Stop operating as though breach is a future hypothetical. AI-speed exploitation means your current posture may already be penetrated in ways your tooling hasn’t detected. Design your controls, your monitoring, and your response playbooks on the assumption that something is already wrong.

In practice, this means:

- Running threat hunts that begin from an assumption of active compromise, rather than waiting for alerts to surface.

- Deploying deception technologies (honeypots, decoy credentials, and canary service accounts) to catch AI-driven lateral movement early.

- Tuning your SIEM (whether Microsoft Sentinel, Splunk, or an equivalent platform) for anomalous authentication patterns across both human and non-human identities, not just signature-based detections.
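The deception and anomaly checks above can be sketched as plain triage logic. This is a minimal Python illustration, not a query in Sentinel's KQL or Splunk's SPL. It assumes hypothetical canary account names and a hypothetical per-identity baseline of countries and UTC hours. It flags any use of a decoy, and any login that falls outside its identity's baseline.

```python
from dataclasses import dataclass

# Hypothetical decoy identities: no legitimate process should ever use them,
# so any authentication attempt against them is a high-confidence signal.
CANARY_ACCOUNTS = {"svc-backup-legacy", "svc-payroll-export"}


@dataclass
class AuthEvent:
    identity: str
    country: str   # country code resolved from the source IP
    hour_utc: int  # 0-23


@dataclass
class Baseline:
    countries: set[str]  # countries this identity normally authenticates from
    active_hours: range  # UTC hours in which this identity normally authenticates


def triage(event: AuthEvent, baselines: dict[str, Baseline]) -> list[str]:
    """Return the reasons (possibly none) this authentication event deserves a look."""
    if event.identity in CANARY_ACCOUNTS:
        return ["canary credential used"]  # decoys have no baseline; any use is suspicious
    baseline = baselines.get(event.identity)
    if baseline is None:
        return ["identity has no behavioral baseline"]
    reasons = []
    if event.country not in baseline.countries:
        reasons.append(f"unexpected geography: {event.country}")
    if event.hour_utc not in baseline.active_hours:
        reasons.append(f"outside normal hours: {event.hour_utc:02d}:00 UTC")
    return reasons
```

For example, a service account baselined to US business hours that authenticates from an unfamiliar country at 03:00 UTC comes back with both an "unexpected geography" and an "outside normal hours" reason.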

Bring your identity team into incident response from day one. Non-human identities — service accounts, API keys, OAuth tokens — are now the primary lateral movement vector for AI-speed attackers, yet most IR playbooks still treat them as an afterthought.

Platforms that provide continuous, real-time authentication monitoring across every identity give your SOC the visibility to detect AI-speed pivoting before it escalates. Start by asking a hard question: What would your current tooling actually catch if an AI agent began moving through your environment tonight?

Prioritize continuously

The annual pen test is dead as a primary assurance mechanism. Replace episodic testing with AI-assisted continuous vulnerability prioritization. You don’t need to find every vulnerability; you need to know which ones an AI attacker would chain first, and close those gaps before it can.

For teams wondering where to start: look for solutions that score vulnerabilities by exploitability and blast radius — not just CVSS severity — and that update those scores continuously as your environment changes.
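The toy scoring function below shows how exploitability and blast radius can reorder a queue sorted by CVSS. The formula (probability of exploitation × reachable assets, doubled for internet-facing hosts), the field names, and the three findings are all hypothetical. `exploit_prob` stands in for something like an EPSS score, and `reachable_assets` stands in for the blast radius that an attack-path analysis might report.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    vuln_id: str            # placeholder identifiers, not real CVEs
    cvss: float             # 0.0-10.0 base severity
    exploit_prob: float     # 0.0-1.0 likelihood of exploitation (EPSS-style, assumed)
    reachable_assets: int   # assets reachable if this host falls (blast radius)
    internet_facing: bool


def priority(f: Finding) -> float:
    """Hypothetical score: exploitation likelihood times blast radius,
    doubled when the asset is directly reachable from the internet."""
    exposure = 2.0 if f.internet_facing else 1.0
    return f.exploit_prob * f.reachable_assets * exposure


findings = [
    Finding("VULN-A", cvss=9.8, exploit_prob=0.02, reachable_assets=3, internet_facing=False),
    Finding("VULN-B", cvss=6.5, exploit_prob=0.60, reachable_assets=40, internet_facing=True),
    Finding("VULN-C", cvss=7.5, exploit_prob=0.10, reachable_assets=120, internet_facing=False),
]

# Two orderings of the same findings: by severity alone, and by the hypothetical score.
by_cvss = [f.vuln_id for f in sorted(findings, key=lambda f: f.cvss, reverse=True)]
by_priority = [f.vuln_id for f in sorted(findings, key=priority, reverse=True)]
```

Sorted by CVSS, the queue is A, C, B. Sorted by this score, it becomes B, C, A, because the medium-severity, internet-facing finding is both likely to be exploited and connected to far more of the environment.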

Cloud-Native Application Protection Platforms (CNAPPs) such as Wiz, Prisma Cloud, or Microsoft Defender for Cloud surface full attack paths across your cloud estate. They show how an adversary could chain misconfigurations and vulnerabilities together, rather than presenting a flat list of CVEs.

Pair these with an identity security layer: platforms like Silverfort provide continuous monitoring of authentication behavior across every identity — human and non-human — closing the critical gap between vulnerability discovery and identity-based exploitation.

An AI attacker doesn’t just exploit code flaws; it exploits identity misconfigurations and over-privileged credentials to move laterally once it’s in. The combination of attack-path visibility from your CNAPP and real-time identity control from your identity security platform is what separates organizations that scan from organizations that meaningfully reduce their exploitable risk surface.

Eliminate implicit trust

Every identity (human, non-human, and AI agent) must be re-verified at every step, every time. This is Zero Trust in its truest form: not a marketing term, but an architectural principle enforced at the identity layer. If a service account is over-privileged, an AI attacker will find it. Your job is to guarantee that when it does, the account has nothing useful to abuse.
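In code, "re-verified at every step, every time" reduces to a deny-by-default decision that runs on each request, not once per session. The sketch below assumes a hypothetical grant table and request shape. In practice, an identity provider or policy engine would make this decision, not an in-process dictionary.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Request:
    identity: str
    action: str             # e.g. "read" or "write"
    resource: str
    token_expiry: float     # Unix timestamp at which the presented token expires
    device_compliant: bool  # posture signal from the calling workload or device


# Hypothetical least-privilege grants: the exact (action, resource) pairs each
# identity may perform. Anything not listed here is denied.
GRANTS: dict[str, set[tuple[str, str]]] = {
    "svc-report-builder": {("read", "sales-db")},
}


def authorize(req: Request, now: Optional[float] = None) -> bool:
    """Evaluate every request from scratch and deny by default.

    No prior session, network location, or earlier approval is consulted:
    each call re-checks token freshness, caller posture, and the explicit grant.
    """
    now = time.time() if now is None else now
    if req.token_expiry <= now:
        return False  # expired credentials are never honored
    if not req.device_compliant:
        return False  # non-compliant callers are refused regardless of grants
    return (req.action, req.resource) in GRANTS.get(req.identity, set())
```

Under this scheme, an attacker who compromises `svc-report-builder` gains exactly one capability: reading `sales-db`. Attempts to write, or to replay an expired token, are refused.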

eXchange intelligence

No single organization has the visibility to defend against AI-speed threats alone. The Glasswing coalition model — pre-competitive, sector-specific threat intelligence sharing — is the only viable path to collective defense. Join one. Or build one. The threat intelligence gap is now measured in minutes, not months.

I’ve experienced the power of this firsthand. Early in my tenure leading enterprise security strategy, I was part of a sector-wide threat intelligence sharing coalition when indicators of a coordinated credential-abuse campaign began appearing across member organizations’ environments.

The first institution to detect unusual service account authentication patterns (logins from unexpected geographies, access to sensitive APIs at 3 a.m.) shared those indicators of compromise with coalition members within hours. In that window, three other organizations blocked the relevant attack vectors before the adversary could pivot to them. No single organization had the full picture on its own. Together, we neutralized a campaign that each of us, in isolation, would have taken days or weeks to attribute and contain. That experience cemented my conviction: in the age of AI-speed threats, collective defense is not a strategic option; it is the baseline.
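Once indicators are shared, the mechanical half of that story is simple: each member sweeps its own authentication logs for matches. Real coalitions commonly exchange indicators in structured formats such as STIX over TAXII. This sketch deliberately uses plain in-memory records with hypothetical field names. Its IP addresses come from the documentation-only ranges.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Indicator:
    kind: str    # hypothetical categories: "ip" or "user_agent"
    value: str
    source: str  # coalition member that reported it


@dataclass(frozen=True)
class LogEntry:
    identity: str
    source_ip: str
    user_agent: str


def match_shared_iocs(logs: list[LogEntry], iocs: list[Indicator]) -> list[tuple[LogEntry, Indicator]]:
    """Return each local log entry that matches an indicator shared by a peer."""
    index = {(i.kind, i.value): i for i in iocs}  # O(1) lookup per observed field
    hits = []
    for entry in logs:
        for kind, value in (("ip", entry.source_ip), ("user_agent", entry.user_agent)):
            ioc = index.get((kind, value))
            if ioc is not None:
                hits.append((entry, ioc))
    return hits
```

Because the lookup is keyed on indicator type and value, a member can rerun the sweep every time a peer publishes new indicators, instead of waiting for a scheduled review.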

Elevate your offense

Use the same AI capabilities defensively. Deploy AI-powered red teams internally before external adversaries deploy them against you. The same reasoning capability that makes Mythos dangerous makes it invaluable as a defender’s tool. The organizations winning this race are the ones using AI offensively, safely, on their own environments, on their own schedule.

What good security looks like in the world of AI attacks

This is not a doom narrative. It’s an inflection point — and inflection points reward the organizations that move first.

The CISOs who will define the next decade of security architecture are already asking their teams different questions. Not “Are we patched?” but “What would an AI attacker target first?” Not “Do we have a pen test scheduled?” but “What does our continuous exposure surface look like right now?” Not “How many service accounts do we have?” but “How many of them does anyone actually own?”

The technology is not the barrier. The willingness to reframe the question is.

Mythos found a 27-year-old bug that had been invisible since 1997. AI didn’t create that vulnerability. But it changed who finds it first. Your job — our job — is to make sure defenders get there before attackers do.

Three actions for this quarter

- Commission an AI-assisted attack surface assessment. Understand what an AI attacker would see in your environment before one does.

- Audit every non-human identity. Service accounts, API keys, OAuth tokens — identify what’s over-privileged, what’s unmonitored, and what nobody owns.

- Join or form a sector-specific threat intelligence coalition. Collective defense at machine speed is the only viable answer to AI-speed threats.
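The non-human identity audit in the second action can start simply. For each identity, compare the permissions it was granted with the ones it actually used, then check whether anyone monitors or owns it. The record shape, the 90-day usage window, and the permission strings below are assumptions for illustration. The real inputs would come from your cloud IAM, secrets manager, and SIEM inventories.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class NonHumanIdentity:
    name: str
    kind: str                                        # "service_account", "api_key", "oauth_token", ...
    owner: Optional[str]                             # accountable human or team; None if nobody owns it
    granted: set[str] = field(default_factory=set)   # permissions assigned
    used_90d: set[str] = field(default_factory=set)  # permissions exercised in the last 90 days (assumed window)
    monitored: bool = False                          # is its authentication activity sent to the SIEM?


def audit(identities: list[NonHumanIdentity]) -> dict[str, list[str]]:
    """Map each flagged identity's name to its audit findings; clean identities are omitted."""
    report: dict[str, list[str]] = {}
    for nhi in identities:
        findings = []
        unused = nhi.granted - nhi.used_90d
        if unused:
            findings.append(f"over-privileged: {len(unused)} unused permission(s)")
        if not nhi.monitored:
            findings.append("unmonitored")
        if nhi.owner is None:
            findings.append("no owner")
        if findings:
            report[nhi.name] = findings
    return report
```

Identities that pass every check do not appear in the report. An empty result therefore means nothing was flagged, not that nothing was checked.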