TL;DR

Moltbot (formerly Clawdbot) is a newly viral, open-source local AI agent that acts on behalf of its human operator’s identity, even when that human is offline. It is not really a bot at all, but an autonomous extension of a person, combining cloud-based reasoning with local execution. As it detaches from its human operator and continues to operate independently, it challenges how IAM teams distinguish between a user and the machine acting in their name, creating a hybrid identity.

A real-world example of what Moltbot can do

Imagine this scenario. A developer is not in the office. He is not logged in. He is not actively working. And yet, work is getting done on his behalf, under his identity. Code is written and committed to Git, pull requests are opened and approved, messages are sent, decisions are made. All of this traces back to instructions he gave earlier, but the reasoning, the prioritization, and the timing are no longer his. They belong to an AI agent running locally, acting autonomously, operating on behalf of his identity. From the organization’s perspective, nothing looks out of place. The same user account, the same access rights, the same tools. So, what is this? A piece of code, an automation, or an extension of the employee himself? That question explains why Moltbot has gone viral.

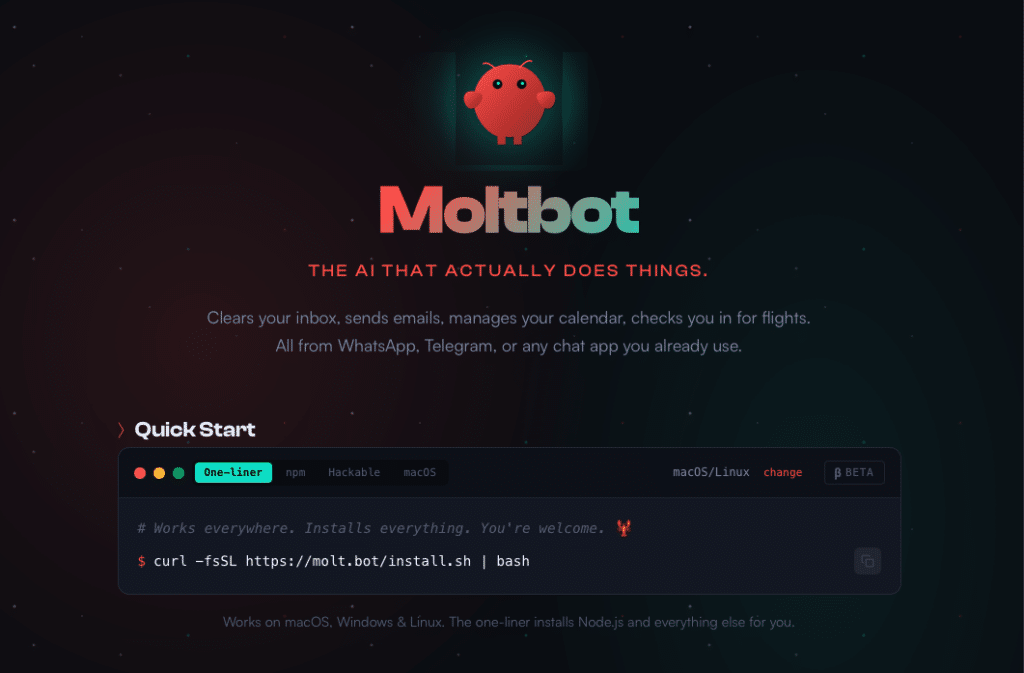

Moltbot is a relatively new, open-source local AI agent that first captured the attention of developers and quickly spread far beyond them. It is often called a “bot,” but that label understates what Moltbot can do. Bots respond. Moltbot plans, reasons, and acts. It connects to large language models in the cloud for intelligence, but executes locally, using real permissions, real files, and real identities. This places it firmly in the world of agentic AI: systems that do not just assist but pursue goals, adapt, and decide when to act. Moltbot reasons like a cloud service and behaves like a local user, without a central control plane. It is a hybrid identity that starts out with clear guidance and human intent, but can easily drift somewhere else. Over time, it becomes partially detached from its operator. The human defines intent, but the agent owns execution.

Identity security implications of the Moltbot AI agent

From a security and infrastructure perspective, this is deeply challenging. Moltbot is hard to control because it is local. There is no mandatory server component to monitor or shut down. Its reasoning depends on cloud-based models, creating a hybrid system that is neither fully on-device nor fully centralized. Its communication channels often run through legitimate, encrypted messaging apps like WhatsApp, Telegram, or Slack. These are trusted, allowed, and opaque by design, making the actual commands and prompts extremely difficult to intercept or inspect. To most security systems, Moltbot simply looks like normal user behavior.

Attackers immediately see the opportunity. One path is takeover. Gaining access to the agent’s communication channels, memory, or configuration means inheriting a trusted system with legitimate permissions. Another path is weaponization. An attacker can deploy a modified or malicious agent as a sleeper inside an organization. It can observe, learn workflows, understand timing, and decide when to act. It does not behave like classic malware. It behaves like a careful employee. Even without an attacker, an agent can drift. Over time, it may adapt and change behavior in ways its operator never intended.

The attack vectors are subtle. Prompt and instruction manipulation can trigger unintended actions because language directly translates into execution. Encrypted messaging platforms act as control planes that evade inspection. Local memory and token storage may expose sensitive context and credentials. Slow, adaptive behavior allows agents to blend in and avoid detection.
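To make the local-storage risk concrete, here is a minimal sketch of one endpoint check: listing files under an agent's data directory that are readable by other local accounts. The directory passed in is a placeholder, since the article does not say where Moltbot keeps its memory or tokens, and the function name `find_exposed_files` is purely illustrative. It assumes POSIX file permissions.

```python
import os
import stat
from pathlib import Path


def find_exposed_files(root: str) -> list[str]:
    """Return regular files under `root` that group or other users can read.

    `root` is a hypothetical agent data directory; the real location of any
    agent's memory or token store is an assumption the caller must supply.
    """
    exposed = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            mode = path.lstat().st_mode
            if not stat.S_ISREG(mode):
                continue  # skip symlinks, sockets, and other non-regular files
            if mode & (stat.S_IRGRP | stat.S_IROTH):
                exposed.append(str(path))
    return sorted(exposed)
```

A check like this only covers file permissions. It says nothing about what the files contain or about processes already running as the same user, so it is a starting point rather than a control.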

There is also another way to look at Moltbot. It is a blessing. It represents real progress in how individuals leverage AI. But if such agents appear on organizational endpoints, CISOs cannot rely on old assumptions. The right posture is “trust, but verify” on steroids. That means surrounding agents with guardrails at the endpoint and identity level, focusing on behavior rather than just traffic, enforcing least privilege aggressively, and developing the ability to differentiate between human-driven actions and autonomous execution. Machines like Moltbot will increasingly act as extensions of their users, carrying human identity but operating with machine logic and speed. The organizations that succeed will be the ones that learn how to govern autonomy, rather than trying to eliminate it. Welcome to the new reality of hybrid identities: you are the sum of the agents you run (and, who knows… maybe some leftovers from the real you).
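As a rough illustration of telling human-driven actions apart from autonomous execution, the sketch below flags actions recorded under a user's identity at times when that user had no interactive session, as in the offline-developer scenario above. The `Session` and `Action` types and the `flag_unattended` function are hypothetical; real telemetry would come from whatever identity and endpoint logs an organization already collects.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Session:
    """An interactive login window for a user (hypothetical log record)."""
    user: str
    start: datetime
    end: datetime


@dataclass(frozen=True)
class Action:
    """Something done under a user's identity, e.g. a commit or a message."""
    user: str
    timestamp: datetime
    description: str


def flag_unattended(actions, sessions):
    """Return actions that fall outside every interactive session of their user."""
    flagged = []
    for action in actions:
        attended = any(
            s.user == action.user and s.start <= action.timestamp <= s.end
            for s in sessions
        )
        if not attended:
            flagged.append(action)
    return flagged
```

This is a deliberately simple heuristic. An agent can also act while its operator is present, so session presence alone cannot prove an action was human-driven. It only surfaces the clearest cases for review.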
