TL;DR

In December 2025, a critical ServiceNow AI vulnerability enabled user impersonation and full workflow abuse. A static credential and weak identity binding let agents act on forged identities, including agent-to-agent trust abuse. This is an identity failure at runtime, and there are important lessons to learn from it. Thanks to ServiceNow for the rapid remediation and the transparency that allowed learning beyond the CVE.

What happened?

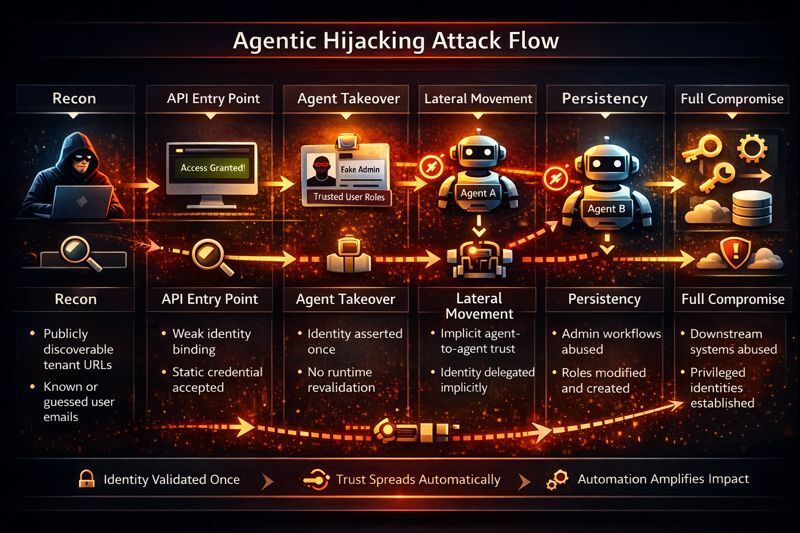

In late December 2025, security researchers disclosed a critical authentication and authorization vulnerability in ServiceNow’s AI platform, later tracked as CVE-2025-12420. The flaw affected the Virtual Agent API and Now Assist AI agents. Researchers documented that an attacker who successfully exploited the issue could impersonate arbitrary users, including administrators, create privileged accounts, execute workflows, and effectively gain full control of a customer’s ServiceNow tenant, with the ability to pivot into connected enterprise systems. The vulnerability resulted from three factors in combination: a static integration credential, insufficient identity verification at the API layer, and AI agents that executed actions based on unverified identity context, bypassing traditional controls.

ServiceNow coordinated closely with the reporting researchers, issued patches and configuration changes in early January 2026, rotated embedded credentials, and communicated mitigation guidance directly to customers. There was no public evidence of widespread exploitation. The importance of this incident lies less in observed damage and more in what it revealed about how automation and identity now intersect in enterprise platforms.

A possible weaponization scenario

An attacker identifies a customer’s ServiceNow tenant URL and obtains the email address of a privileged user. By exploiting the vulnerable Virtual Agent API, the attacker impersonates that user without authenticating, bypassing MFA and SSO entirely.

Using Now Assist AI agents, the attacker invokes automation to create a new administrative account and assign high-privilege roles. These actions are executed through approved workflows and logged as legitimate activity. The attacker then leverages ServiceNow integrations to reset credentials in a connected identity provider, provision access to cloud environments, or suppress alerts.

At this point, the vulnerability is fully weaponized. The attacker establishes persistent administrative control using business logic rather than exploits and moves laterally into downstream systems that trust ServiceNow as a control plane.

The identity of it: Runtime trust for agents

"What made this exposure consequential was not missing security controls, but where those controls stopped. Identity was verified once, at an entry point, and then treated as permanently trustworthy. After that moment, identity was no longer checked, revalidated, or questioned as actions were executed."

Roy Akerman, VP of Identity Security Strategy

That model breaks in agentic systems. Agents are long-lived, autonomous, and compositional. They operate over time, invoke other agents, and trigger workflows without human involvement. Each action extends trust further into the system, yet the identity behind those actions is never reasserted or reevaluated.

As a result, identity became a static label instead of a runtime control. Authority was enforced at the perimeter, while real power was exercised deep inside the execution flow. When the original identity assertion was wrong, every downstream decision inherited that mistake.

In an agentic environment, identity must travel with the action. Agents need identities of their own, clear limits on what they can do, and explicit rules for how authority is delegated between agents. Without runtime identity validation, automation does not just move faster. It moves blindly.
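One way to picture identity traveling with the action is a small context object that is checked at the moment of execution rather than only at the entry point. The sketch below is illustrative only: `ActionContext`, `authorize`, `execute`, the five-minute freshness window, and the action names are assumptions made for this example, not ServiceNow APIs.

```python
from dataclasses import dataclass
from typing import Callable, Optional
import time


@dataclass(frozen=True)
class ActionContext:
    """Identity context carried by every action (hypothetical shape)."""
    principal: str                  # who the action is performed on behalf of
    agent_id: str                   # the agent's own identity
    allowed_actions: frozenset      # explicit limits on what this agent may do
    verified_at: float              # when the principal's identity was last proven
    max_age_seconds: float = 300.0  # assumed freshness window


class IdentityError(Exception):
    """Raised when an action fails its runtime identity check."""


def authorize(ctx: ActionContext, action: str, now: Optional[float] = None) -> None:
    """Re-check identity and limits at execution time."""
    now = time.time() if now is None else now
    if now - ctx.verified_at > ctx.max_age_seconds:
        raise IdentityError(f"identity for {ctx.principal} is stale; re-verify")
    if action not in ctx.allowed_actions:
        raise IdentityError(f"agent {ctx.agent_id} may not perform {action}")


def execute(ctx: ActionContext, action: str, handler: Callable,
            now: Optional[float] = None):
    """Run the handler only if the identity check passes for this action."""
    authorize(ctx, action, now)
    return handler()
```

The point of the design is that `authorize` runs on every call to `execute`, so a stale or out-of-scope identity is rejected deep inside the flow instead of being trusted because it passed once at the perimeter.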

Identity Security lessons

If you build, secure, or set guardrails for agents, here is my take on the identity and access control failure patterns that this vulnerability surfaced with unusual clarity. Each one traces back to how the agents, their credentials, and their trust relationships were set up:

Impersonation without authentication

The platform accepted identity assertions based on weak identifiers rather than cryptographic proof. Email addresses functioned as identity tokens, bypassing session validation, MFA, and assurance levels entirely.
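To make the contrast concrete, here is a minimal sketch of accepting only a signed, unexpired assertion that records MFA, instead of a bare email address. The helper names, the claim names (`sub`, `exp`, `mfa`), and the HMAC shared key are assumptions for illustration. A production system would more likely validate tokens issued by its identity provider, with keys that are per-tenant and rotated rather than static.

```python
import base64
import hashlib
import hmac
import json


def sign_assertion(claims: dict, key: bytes) -> str:
    """Issue a signed assertion (stands in for an identity provider)."""
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + tag


def verify_assertion(token: str, key: bytes, now: float) -> dict:
    """Accept an identity only with cryptographic proof, freshness, and MFA."""
    try:
        payload, tag = token.rsplit(".", 1)
    except ValueError:
        raise PermissionError("malformed assertion")
    expected = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison; a bare email address fails here.
    if not hmac.compare_digest(tag.encode(), expected.encode()):
        raise PermissionError("bad signature")
    claims = json.loads(base64.urlsafe_b64decode(payload))
    if claims.get("exp", 0) <= now:
        raise PermissionError("assertion expired")
    if not claims.get("mfa"):
        raise PermissionError("insufficient assurance level")
    return claims
```

With this shape, knowing a privileged user's email address is not enough: the caller must present something only a successful authentication could have produced.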

Privilege escalation plus persistence

Once impersonation succeeded, attackers did not need to exploit additional vulnerabilities. They used legitimate workflows to create new privileged accounts and modify roles, establishing persistence through authorized state changes rather than covert backdoors.

Business logic and workflow abuse

The exploit path relied on approved automation behaving exactly as designed. Provisioning flows, approvals, and configuration changes executed correctly, just under a false identity. This shifted the attack surface from code defects to trust assumptions embedded in business logic.

Agent-to-agent trust abuse

Agents delegated authority to other agents without revalidating identity or intent. This created a modern confused-deputy scenario where trust propagated transitively across agents, amplifying the impact of a single compromised identity context.
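A common countermeasure to the confused-deputy pattern is to make delegation explicit and attenuating: a receiving agent can get at most the intersection of what the delegator holds, what was requested, and what policy allows that agent. The sketch below assumes a hypothetical `Grant` type and `agent_policy` mapping; the agent and scope names are invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """Authority held by an agent on behalf of a principal (hypothetical)."""
    principal: str
    holder: str          # agent currently holding the authority
    scopes: frozenset    # what the holder may do for the principal
    chain: tuple = ()    # agents the authority has already passed through


def delegate(grant: Grant, to_agent: str, requested, agent_policy: dict) -> Grant:
    """Hand authority to another agent without ever widening it."""
    requested = frozenset(requested)
    permitted = agent_policy.get(to_agent, frozenset())
    # Delegated scopes are bounded by the delegator's grant AND the
    # receiving agent's own policy.
    scopes = grant.scopes & requested & permitted
    denied = requested - scopes
    if denied:
        raise PermissionError(
            f"{grant.holder} cannot delegate {sorted(denied)} to {to_agent}"
        )
    return Grant(grant.principal, to_agent, scopes, grant.chain + (grant.holder,))
```

Because each `Grant` records its `chain`, an audit trail can show which agents authority passed through, not just the user the final action was attributed to.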

Second-order execution

Low-trust inputs triggered high-privilege actions indirectly through intermediary agents. The attack surface was not prompt or language manipulation, but execution context and delegated authority.
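One way to reason about second-order execution is to treat trust like taint: the trust level of an action is the lowest level seen anywhere along the path that triggered it, so an intermediary agent cannot raise it. The levels, the `REQUIRED` table, and the action names below are assumptions chosen for illustration.

```python
from enum import IntEnum


class Trust(IntEnum):
    UNAUTHENTICATED = 0
    AUTHENTICATED = 1
    MFA_VERIFIED = 2


# Minimum trust each action requires (hypothetical policy table).
REQUIRED = {
    "ticket.comment": Trust.UNAUTHENTICATED,
    "role.assign": Trust.MFA_VERIFIED,
}


def effective_trust(hops) -> Trust:
    """Trust of a request is the weakest link along its execution path."""
    return min(hops, default=Trust.UNAUTHENTICATED)


def may_execute(action: str, hops) -> bool:
    """Allow an action only if the whole path meets its required trust."""
    # Unknown actions default to the strictest requirement.
    required = REQUIRED.get(action, Trust.MFA_VERIFIED)
    return effective_trust(hops) >= required
```

For example, `may_execute("role.assign", [Trust.UNAUTHENTICATED, Trust.MFA_VERIFIED])` is `False`: the request originated from an unauthenticated input, and passing through a highly trusted agent does not upgrade it.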

Detection and audit erosion

When the identity layer itself was compromised and attackers kept moving upstream, actions appeared to be legitimate user activity. As a result, traditional identity-based monitoring and behavioral analytics lost their fidelity.

Together, these patterns demonstrate that agentic systems erode traditional security layering unless identity controls are enforced continuously, contextually, and at runtime. When identity is treated as an assumption rather than a control, automation becomes an accelerant for compromise rather than a force multiplier for security.

Conclusion: From misconfiguration to the next APT

This attack flow exposes a systemic failure in the design and execution of AI agents rather than a single flaw. In the agentic interaction flow, identity was validated once and then assumed indefinitely. Trust propagated across agents and workflows without revalidation. Each decision looked reasonable in isolation, but together they formed an unsafe execution path. These are flow-level vulnerabilities, rooted in how authority moves through long-lived, autonomous, and compositional systems.

The blind spot is not code quality, but trust continuity. Organizations must scan for unsafe trust paths, not just misconfigurations. IAM and security teams must assume these mistakes will happen and enforce runtime identity security so abuse is detected and stopped even when design assumptions fail. This is also a call to the attack validation community to model agentic attack paths and trust propagation failures.
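Scanning for unsafe trust paths can be sketched as a graph search: model entry points, agents, and privileged actions as nodes, mark each hop with whether it revalidates identity, and report every path from a low-trust source to a privileged action that never revalidates. The graph format, the `find_unsafe_paths` helper, and the node names are hypothetical, chosen only to illustrate the idea.

```python
from collections import deque


def find_unsafe_paths(edges, low_trust_sources, privileged_sinks):
    """Return paths from low-trust sources to privileged sinks with no revalidation.

    edges maps a node to a list of (next_node, revalidates_identity) pairs.
    """
    unsafe = []
    queue = deque((src, (src,)) for src in low_trust_sources)
    while queue:
        node, path = queue.popleft()
        if node in privileged_sinks:
            unsafe.append(list(path))
            continue
        for nxt, revalidates in edges.get(node, ()):
            # A hop that revalidates identity breaks the unsafe chain;
            # skipping visited nodes avoids looping on cycles.
            if revalidates or nxt in path:
                continue
            queue.append((nxt, path + (nxt,)))
    return unsafe


edges = {
    "public_api": [("chat_agent", False)],
    "chat_agent": [("provisioning_agent", False), ("hr_agent", True)],
    "provisioning_agent": [("create_admin", False)],
    "hr_agent": [("update_payroll", False)],
}
paths = find_unsafe_paths(edges, ["public_api"], {"create_admin", "update_payroll"})
```

In this toy graph the search reports the path through `provisioning_agent` to `create_admin`, while the path through `hr_agent` is not reported because that hop revalidates identity.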

Do not let the speed of AI adoption and the spirit of experimentation leave identity behind. As agentic systems move faster than traditional controls, identity security must expand into new territory: continuous, real-time, and context-aware. The organizations that adapt identity to how work actually executes will be the ones that stay ahead of what comes next.

This is not the next generation of attacks. Attack groups are already incorporating scanning, challenge evasion, and exploitation of these faulty design patterns into their APT infrastructure and testing them against large enterprises.

Resources

CVE-2025-12420

Official CVE record describing the ServiceNow authentication and authorization vulnerability.

https://nvd.nist.gov/vuln/detail/CVE-2025-12420

ServiceNow Security Advisory and Customer Notification (January 2026)

ServiceNow’s official disclosure and remediation guidance for the Virtual Agent and Now Assist AI vulnerability.

https://support.servicenow.com/kb?id=kb_article_view&sysparm_article=KB0XXXXXX

(Note: ServiceNow advisories are customer-accessible; exact KB IDs vary by instance.)

AppOmni Research: “BodySnatcher” Agentic AI Vulnerability in ServiceNow

Detailed third-party technical analysis of the vulnerability, exploitation paths, and agentic abuse patterns.

https://appomni.com/ao-labs/bodysnatcher-agentic-ai-security-vulnerability-in-servicenow/

Dark Reading

Independent reporting and expert commentary on the vulnerability and its implications for AI-driven SaaS platforms.

https://www.darkreading.com/remote-workforce/ai-vulnerability-servicenow

ThaiCERT Advisory on ServiceNow AI Vulnerability

National CERT analysis highlighting risk, affected components, and mitigation recommendations.

https://www.thaicert.or.th/en/2026/01/15/critical-ai-driven-vulnerability-discovered-in-servicenow-could-lead-to-full-system-compromise/